Reimagining Radio in the Age of Generative AI

UX Researcher (1 of 2) | Mixed Methods, AI Strategy & Responsible AI

TL;DR: Reframed iHeart’s GenAI exploration from “feature experimentation” to bounded integration grounded in listener trust and passive listening contexts. Co-led multi-phase research and developed the first audio-specific Responsible AI framework, translating emerging risks into product guardrails that enabled safe public deployment of GenAI in radio.

RELEVANCE OF THIS WORK TO PRESENT DAY

As GenAI moves from experimentation to integration, the core question is no longer what AI can do, but under what conditions it should operate.

This project tackled that question inside audio, where trust is fragile and attention is low, long before radio-specific governance patterns existed. “Human-AI interaction guidelines” existed broadly, but none were tailored to audio experiences.

Instead of asking “What AI feature should we build?”, we reframed the decision space:

Where can GenAI add value without eroding the “human host” moat?

How do we innovate without destabilizing relational trust?

What guardrails are required if GenAI goes public in audio?

How do we compete in AI without reactive mimicry?

This reframing prevented solution-first design and anchored the work in risk-informed innovation.

THE DECISION GAP

Introducing GenAI into a trust-based, commute-driven medium posed both competitive opportunity and systemic risk.

My role: As one of two UX Researchers working alongside two Designers, I helped define the conditions under which AI could responsibly exist in this ecosystem before experimentation scaled.

My contributions focused on:

Co-architecting the multi-phase research strategy.

Leading synthesis across the survey, interviews, and workshops.

Leading development of the Responsible Audio AI framework.

RESEARCH CONSTRAINTS

This work needed to operate within real organizational constraints:

Trust Risk

Audio AI can hallucinate, mislead, or feel “too human” without consent.

Low-Attention Context

Most users listen while commuting or driving (76% Live Radio usage; majority commute-based listening).

Uneven AI Readiness

Familiarity with AI-supported audio dropped below 50% (vs high familiarity with AI in general).

Repeatable Governance

We needed mitigations that could scale beyond a one-off prototype.

RESEARCH STRATEGY (CO-LED)

My co-researcher and I built a funnel from broad → narrow → build to avoid novelty-driven exploration:

1. Secondary Research to map current AI-audio use cases and known concerns such as misinformation, loss of “human essence,” and ownership.

2. Exploratory Survey (N=100) to establish listener baseline and AI perceptions.

3. Exposure-Testing Interviews to identify where acceptance increased and where it collapsed by testing reactions to AI news, AI podcasts, voice likeness, and AI DJ formats.

4. Co-Design Workshops to generate and pressure-test community-based experiences, ethical boundaries, and governance expectations.

5. Concept & Usability Testing to iterate on voice modes, customization controls, and accessibility considerations.

THE CORE PROBLEM

iHeart wanted to explore AI-integrated audio content. But in late 2023, when generative AI began rapidly entering consumer media, “AI in radio” was uniquely risky:

Voice is intimate and human-coded.

Consumption is often passive (e.g., commuting/driving).

Credibility failures are expensive in a trust-based medium.

No radio-specific Responsible AI principles existed.

Meanwhile, competitors were already launching AI DJs and automated radio experiences, and risks of using AI, such as misinformation, bias, and loss of “human essence,” were being uncovered.

This wasn’t feature exploration. It was high-stakes innovation under uncertainty.

KEY INFLECTION: CONDITIONAL OPENNESS (LED SYNTHESIS)

Across the survey and interviews, a consistent pattern emerged:

Users were not resistant to AI.

They were conditionally open.

What worked

AI that supplements rather than replaces (news/weather, translations, customization).

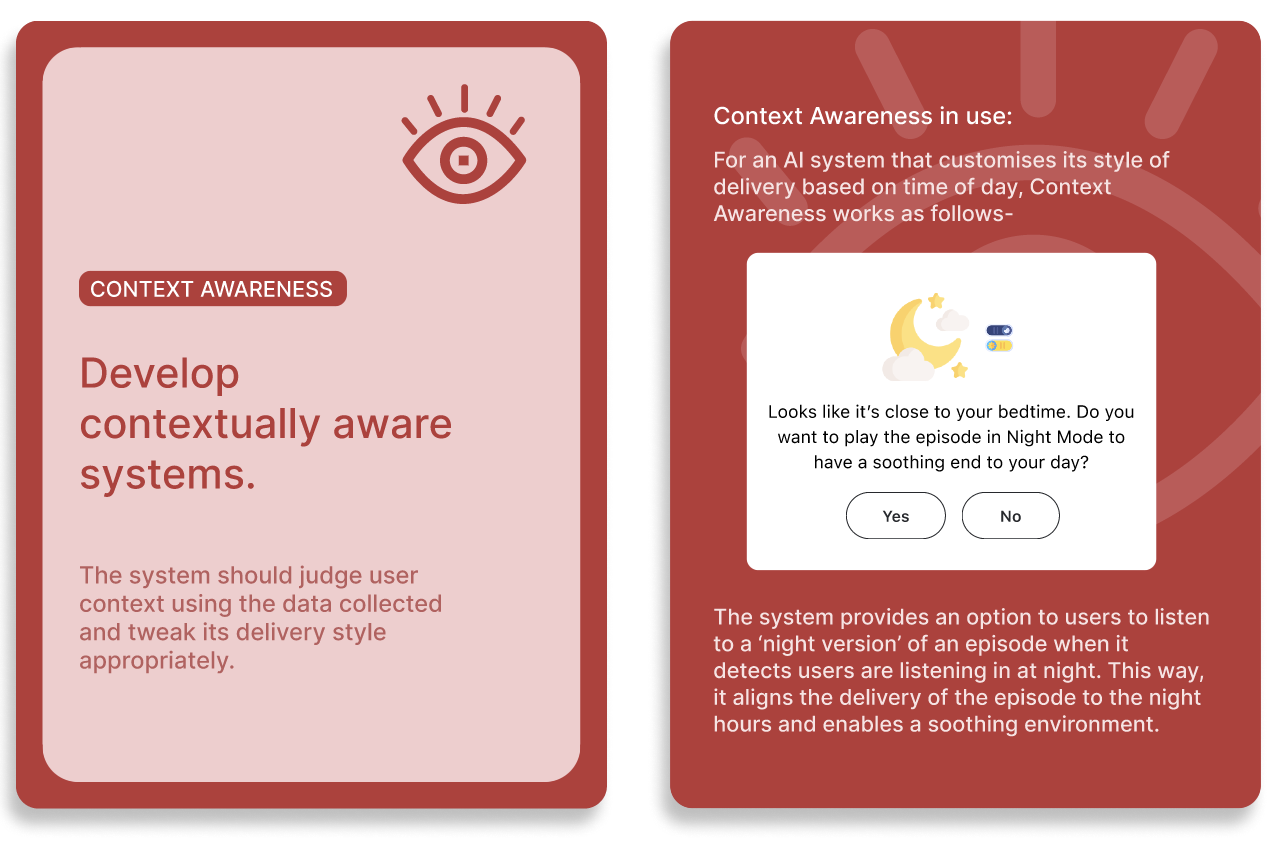

Context-aware personalization when transparently signaled.

Preservation of human connection.

What did not work

Unlabeled AI hosts.

Likeness recreation without consent.

Hallucination risk.

Misuse by bad actors.

Privacy ambiguity.

This revealed that acceptance of AI in audio was not binary. Acceptance depended on whether AI augmented human connection or attempted to replace it. These patterns directly informed the strategic decisions that shaped the product direction.

KEY JUDGEMENT CALLS

1. Supplement, Don’t Replace

We prioritized augmentation over AI-host replacement.

Tradeoff: Less novelty → Higher brand fit + adoption likelihood.

2. Anchor in Community-Led Personalization

Rather than a grab bag of AI utilities, we converged on community channels, preserving ritual and connection while using AI for scalable facilitation.

Tradeoff: One strong directional bet instead of multiple shallow experiments.

3. Responsible AI for Audio-AI Systems (Led end-to-end)

An impact assessment of the concept surfaced several harm vectors:

Privacy risk.

Bad actors.

Hallucinations.

NLP/IP Misuse.

Recognizing the governance vacuum, I authored and operationalized a Responsible Audio AI framework to translate research risks into deployable guardrails:

1. Synthesized cross-industry AI principles.

2. Identified audio-specific risk categories (voice mimicry, parasocial attachment, tone manipulation).

3. Drafted the initial framework.

4. Facilitated structured feedback with senior researchers.

5. Translated principles into actionable design constraints, which were enriched by primary user research (7 principles).

These were then mapped into design system changes like:

Approval flows.

Data usage settings.

Role structures (Admin, Moderator, Member, AI Host).

Clear AI labeling.

Tradeoff: Time invested in governance reduced prototype polish but created a credible path to ship.

THE PROPOSED DIRECTION

We proposed using AI as community infrastructure that delivers Personalized Community-Driven Radio Stations. These were built on key system decisions, including:

Shared-interest group channels in community-driven personalized stations.

Clear role hierarchy.

Public or private communities.

Explicit AI transparency and control signals.

Scalable by community type/size.

User-controlled customization boundaries.

This repositioned GenAI from synthetic performer to connective scaffolding. Strategically, this reduced reputational risk while preserving competitive differentiation.

IMPACT

Defined the strategic conditions for GenAI in radio, grounding innovation in real listening behavior and shaping AI-driven community radio experiences.

De-risked public-facing AI experimentation by translating harm vectors into product guardrails including transparency signals, role hierarchies, and data controls.

Established the first Responsible Audio AI guidelines, embedding governance into product development and guiding future audio-AI initiatives.

WHY THIS MATTERS

GenAI in audio changes trust dynamics because audio feels human and is consumed passively. A viable strategy must couple innovation with actionable governance. This project demonstrated that competitive AI strategy in high-trust ecosystems requires structural constraint.

WHAT I’LL CARRY FORWARD

Key takeaways from this study that taught me more about research:

AI strategy is constraint strategy. The strongest work is often deciding what not to automate.

In GenAI, safety is UX. Approvals, labeling, and controls are interaction design, not policy. Governance and experience design must co-evolve.

Responsible AI must be operationalized. Principles only matter when mapped into product decisions and reusable tools. In emerging AI systems, my role is to define the conditions that make innovation both responsible and durable.